In the past, as with other technologies, there were no legitimate concerns about the influence of robotics and artificial intelligence on society. Today, books, movies and TV series present different scenarios of what might happen to us should we lose control over the innovative machines we ourselves are creating.

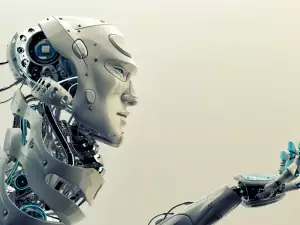

Scientists from the Georgia Institute of Technology, US, have reached new heights in robotics development. They have built a robot that can communicate in a human fashion.

The robot is called Simon, and it is worlds apart from traditional ones. Until now, interaction between man and machine has required going through a series of communication roles.

To avoid this, the American scientists have equipped the new robot with 2 behavior models that are fundamentally different from one another.

The 1st model was simple: it listened to the person and stopped talking whenever it was interrupted. The 2nd, however, was more brash: it could interrupt the person speaking and even argue with them. This one was labeled as socially active.

The active Simon model was given independence in its physical activities. It could also decide on its own when to use its verbal abilities. When it spoke with a human being, Simon looked them right in the eyes.

The experts conducted tests with the more energetic robot model in order to find out what attitudes it evoked in people.

People reacted quite negatively when communicating with it. Participants in the tests were unwilling to communicate with the robot because they saw it as incredibly egotistical. It often played the active role in the conversation, while the human was the passive element.

In tests with the more passive of the 2 models, the people who had to communicate with it labeled it as unsociable and withdrawn. Participants spent more time trying to talk with it and were always the ones leading the conversation. Even so, they said that they liked the passive Simon more than the active one, which basically took over their role.